Why qualitative components are essential for well-rounded insights

Our very first blog post (from our friend and colleague Jessica Broome from Southpaw Insights) was about the complementary combination of qualitative and quantitative research. As Jessica said, “I’d like to call for an end to the segregation of qual and quant, and encourage you to start thinking of the two as peanut butter and bananas: Each is viable on their own, but the two can be even better in combination!”

We learned again this year how essential qualitative research is to understanding the meaning, reasoning and explanations behind the numbers. When you ask people to rate something numerically, qualitative feedback supplies much-needed context for those ratings and gives participants a way to explain their answers.

We ran a user experience test with a website, an app and a physical device, capturing numeric ratings as well as verbatim feedback along the way. When it came time to crunch the numbers, every 1-10 scale and 1-5 star rating averaged out somewhere in the middle.

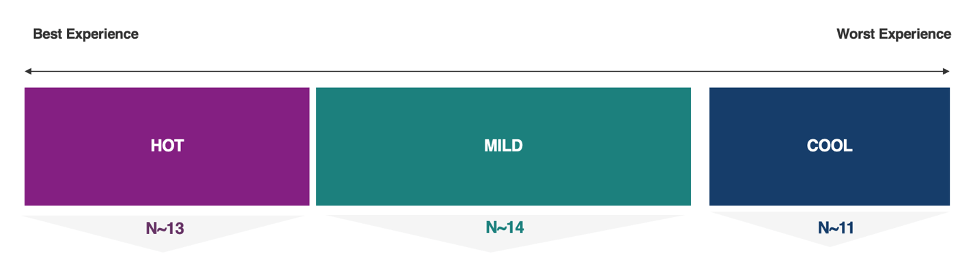

When we looked more closely at the data set, we found a polarized audience: some participants had very positive experiences and felt the product made their lives better, while others had a very frustrating experience and wanted to return the device.
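To see how a middling average can hide a split audience, here is a minimal Python sketch. The ratings are invented for illustration and are not the study's data; the `summarize` helper is likewise hypothetical. It compares a polarized group with a lukewarm one that happens to have the same mean.

```python
from collections import Counter
from statistics import mean, pstdev

# Hypothetical 1-10 ratings, made up for illustration (not the study's data).
polarized = [1, 2, 2, 3, 9, 9, 10, 10]   # people love it or hate it
lukewarm = [5, 5, 6, 6, 6, 6, 6, 6]      # everyone is roughly neutral


def summarize(ratings):
    """Return the mean, the spread, and the count of each score."""
    return {
        "mean": mean(ratings),
        "spread": pstdev(ratings),  # population standard deviation
        "counts": dict(sorted(Counter(ratings).items())),
    }


for name, ratings in [("polarized", polarized), ("lukewarm", lukewarm)]:
    print(name, summarize(ratings))
```

Both groups average 5.75, but the polarized group has a far larger spread and no scores at all between 4 and 8. Looking at the distribution, not just the average, is what flags that there is a story the verbatim feedback needs to explain.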

Not only were they polarized as a group, but as we dug further into the data, we found individuals who gave what might seem like conflicting answers. One user said they would be very likely to purchase the product, yet gave it 2 out of 5 stars when asked to rate it as if writing a review for others. Similarly, a participant who gave high scores and positive feedback overall answered that he would return the product if he had paid for it and it was a free-trial opportunity.

It was essential to use the participants’ qualitative feedback, both from their 1-on-1 interviews and from their posts in our subsequent diary study, to understand the full picture of what was impacting their experiences.

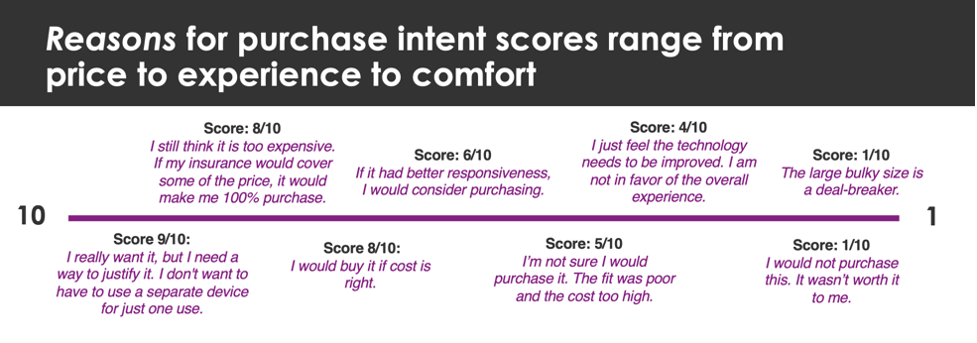

But qualitative played an even more important role when we peeled back another layer. Numbers mean different things to different people. For some, a “9 out of 10” means they are completely happy; they are simply tough graders who are unwilling to give anything a “10”. Others give a 9 when they are pretty much satisfied but point out a big caveat to that 9: it’s only a positive rating IF the product or experience is improved in a meaningful way.

In the end we took all the data points and conversations we had at our disposal to create sub-groups of similarly minded people so that we could give our client a fuller picture – the context behind the scores. This gave the client a window into what types of users might not have the best experience with their product and therefore direction to drive communication (or further research) for finding the right customers. It also provided direction for product development to improve the experience for the right customers.

It helped them understand that a user who wanted to return the product despite a positive experience simply felt he didn’t need it at the cost he would have paid. And a user who would definitely buy the product but gave a low star rating wanted to warn others about things that could be improved, even though the product had improved her life so much that she was likely to buy it.

For our quant-forward friends out there who are hesitant to add qualitative learnings, be sure at the very least to ask open-ended ‘why’ questions after each rating scale so that you’re getting a peek into participants’ reasoning, caveats, and rationale for their numeric responses.

Another great way to get explanatory qualitative to complement your quantitative is to run a 1-day Booth™ Insights with your survey respondents/customer base. This methodology enables quantitative participants to log on and explain their answers to qualitative experts, who can then synthesize the feedback and provide depth to the numbers. If you’d like to find out more about setting up your own Booth, reach out HERE.